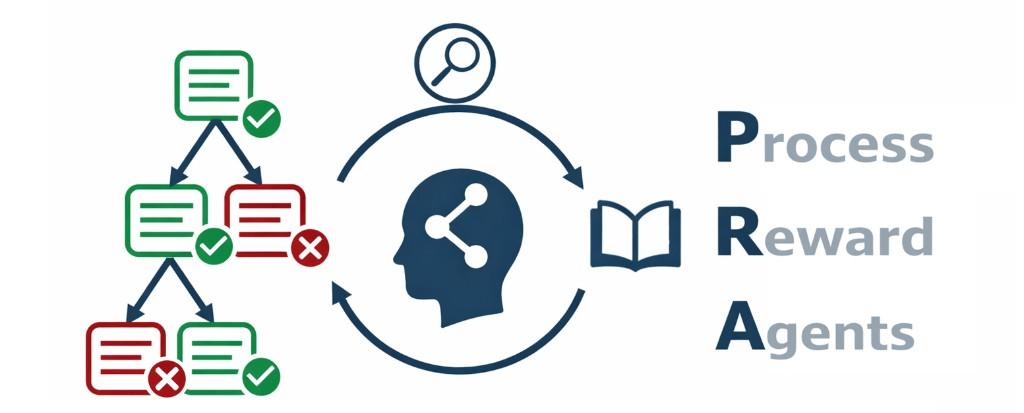

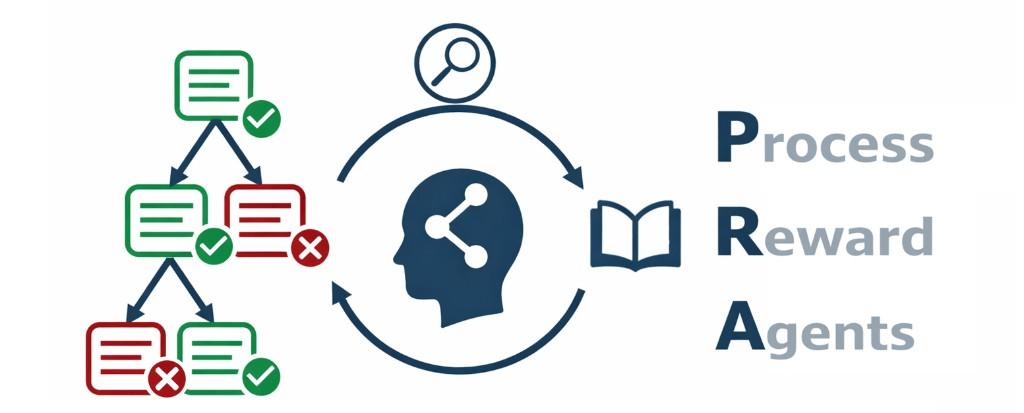

Process Reward Agents for Steering Knowledge-Intensive Reasoning

Reasoning in knowledge-intensive domains remains challenging because intermediate steps are often not locally verifiable: unlike in math or code, evaluating the correctness of a step may require synthesizing clues across large external knowledge sources. As a result, subtle errors can propagate through reasoning traces and may never be detected. Prior work has proposed process reward models (PRMs), including retrieval-augmented variants, but these methods operate post hoc, scoring completed trajectories, which prevents their integration into dynamic inference procedures. Here, we introduce Process Reward Agents (PRA), a test-time method for providing domain-grounded, online, step-wise rewards to a frozen policy. In contrast to prior retrieval-augmented PRMs, PRA enables search-based decoding to rank and prune candidate trajectories at every generation step. Experiments on multiple medical reasoning benchmarks demonstrate that PRA consistently outperforms strong baselines, achieving 80.8% accuracy on MedQA with Qwen3-4B, a new state of the art at the 4B scale. Importantly, PRA generalizes to unseen frozen policy models ranging from 0.5B to 8B parameters, improving their accuracy by up to 25.7% without any policy model updates.
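To make the idea of ranking and pruning candidate trajectories at every generation step concrete, here is a minimal sketch of step-wise beam search driven by an online reward. It is not the authors' implementation: the names `stepwise_beam_search`, `propose_steps`, and `score_step` are hypothetical, the policy and reward below are toy stand-ins, and summing step rewards into a trajectory score is an assumption made for illustration.

```python
from typing import Callable, List, Tuple

Trajectory = Tuple[str, ...]


def stepwise_beam_search(
    propose_steps: Callable[[Trajectory], List[str]],
    score_step: Callable[[Trajectory, str], float],
    beam_width: int,
    max_steps: int,
) -> List[Tuple[float, Trajectory]]:
    """Rank and prune partial trajectories after every reasoning step."""
    # Each beam entry is (cumulative reward, steps taken so far).
    beam: List[Tuple[float, Trajectory]] = [(0.0, ())]
    for _ in range(max_steps):
        candidates: List[Tuple[float, Trajectory]] = []
        for total, trajectory in beam:
            # The frozen policy proposes next steps; it is never updated.
            for step in propose_steps(trajectory):
                # The reward is computed online, before the trajectory is complete.
                reward = score_step(trajectory, step)
                candidates.append((total + reward, trajectory + (step,)))
        if not candidates:
            break
        # Keep only the highest-scoring partial trajectories.
        candidates.sort(key=lambda c: c[0], reverse=True)
        beam = candidates[:beam_width]
    return beam


def toy_policy(trajectory: Trajectory) -> List[str]:
    # Hypothetical stand-in for a frozen policy model.
    return ["supported claim", "unsupported claim"]


def toy_reward(trajectory: Trajectory, step: str) -> float:
    # Hypothetical stand-in for a reward agent; a real one would
    # ground its score in external domain knowledge.
    return 1.0 if step == "supported claim" else 0.0


best = stepwise_beam_search(toy_policy, toy_reward, beam_width=2, max_steps=3)
print(best[0])
```

In this toy setup, the trajectory made up only of supported steps ends up at the top of the beam, while branches that accumulate unsupported steps are pruned as soon as they fall outside the beam width.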

@article{sohn2026processrewardagents,
  title={Process Reward Agents for Steering Knowledge-Intensive Reasoning},
  author={Jiwoong Sohn and Tomasz Sternal and Kenneth Styppa and Torsten Hoefler and Michael Moor},
  author+an = {1=first; 2=first; 3=first; 4=last; 5=last},
  year={2026},
  url={https://process-reward-agents.github.io/}
}